To the IMAX Moon and Beyond

The Moon landings were real — we faked it later.

On September 23, 2005, Magnificent Desolation: Walking on the Moon in 3D was released on giant IMAX screens. It is a 4K stereo3D experience of the Moon landings and of speculative missions from the past and future, and at the time it was IMAX’s widest-opening stereo3D film, with 70 prints worldwide. It was produced and narrated by Playtone/Tom Hanks and directed by Mark Cowen, with cinematography and visual effects supervision by Sean Phillips. The film was honored with the first Visual Effects: Special Venue award by the Visual Effects Society in 2006.

The movie displays many stereo innovations now considered standard, three years ahead of the digital 3D revolution that followed Avatar. Sassoon Film Design (SFD), with ten years of large format stereo VFX and conversion experience, collaborated with the filmmakers on a stereo conversion test of Apollo 16 Moon images. Combined with animations of the Apollo 11 landing from a personal project by John Knoll, this test was part of the initial pitch that green-lit the film. Several other VFX companies and individuals contributed to the final production, including Digital Dimension (DDLA), Scott Simmons, and John Knoll once again. Several of these vendors spoke about their methodology on the film, and on large format movies in general, at a Los Angeles SIGGRAPH chapter presentation on June 13, 2006.

This multi-part article is based on the 2006 presentation, focusing on the work at Sassoon Film Design, and from John Knoll and his team, which endeavored to make the most accurate visit to the Moon since 1972. It is broken into these sections, and will be published over the coming weeks:

Part 1: Prep and Landing —Preparing for Stereo3D in 2005 and Beyond.

Part 2: Strolling on the Moon — Stereo3D Methodology and Innovations.

Part 3: Touchdown — Moon landing Simulation by John Knoll.

Part 4: Flight Time — The Lunar Flight Simulator of Paul Fjeld.

Magnificent Desolation: Lunar Excursion Sequences

Part 1: Prep and Landing

In this article we cover the live action photography, stereo challenges, and image preparation prior to the full stereo3D visual effects. The latter is often an under-appreciated, under-budgeted, under-rated section of 3D film production, but is the spine on which the entire show rests. This article explores the nitty gritty of 3D stereo acquisition and preparation.

The Challenge

The major challenge of Magnificent Desolation in 2005 was re-creating a well known historical event in the most immersive and punishing film format of the time: 4K IMAX stereo. Most of the post production industry had only recently completed the transition to the High Definition standard (1920 pixels wide), which closely mirrored the 2048 pixel resolution common in film production. Several magazine articles lamented the extra computing cost the new standard required. With a horizontal resolution of 4096 pixels, an IMAX frame has 4x the image area of a 2048 pixel frame and is correspondingly harder to compute. Stereo3D production compounded the difficulty of this film, as did computer systems that were barely capable of calculating imagery at this level.
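As a rough check on the "4x" figure, the short sketch below compares pixel counts from the two widths quoted above. The frame heights are not stated in this article, so the calculation assumes both formats share the same aspect ratio; the function name is only illustrative.

```python
# Widths quoted in the text. Heights are not given, so equal aspect
# ratios are ASSUMED for this comparison.
FILM_2K_WIDTH = 2048
IMAX_4K_WIDTH = 4096


def area_ratio(width_a: int, width_b: int) -> float:
    """Pixel-count ratio of two frames that share an aspect ratio."""
    return (width_a / width_b) ** 2


mono_cost = area_ratio(IMAX_4K_WIDTH, FILM_2K_WIDTH)  # one eye
stereo_cost = 2 * mono_cost                           # two eyes, before any stereo-specific work

print(mono_cost, stereo_cost)  # 4.0 8.0
```

Doubling for the second eye only counts pixels. It does not capture the extra matching work between the eyes that stereo requires.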

Stereo 3D, with left-eye and right-eye image sequences, is not merely twice as much work. Each image must be exactly the same in color, edge treatment, green screen extraction, and visual geometry. Stereo3D at IMAX resolution, with movie screens up to 22 m wide, is unforgiving. A half-pixel stereo misalignment can either sink characters into the ground or leave them floating magically above the surface. Simple blurs and basic compositing tricks standard to the industry must be completely rethought to work properly in depth. With myriad other considerations, including the scrutiny each image receives at that scale, making this film was a significant challenge, as each VFX team would discover.
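To put the half-pixel figure in physical terms, here is a small calculation of how large a pixel error becomes on the screen. It assumes the 4096 pixel image spans the full 22 m screen width, which is a simplification; the function name is illustrative.

```python
SCREEN_WIDTH_M = 22.0   # widest screen mentioned in the text
IMAGE_WIDTH_PX = 4096   # 4K horizontal resolution


def pixels_to_mm(pixels: float,
                 screen_width_m: float = SCREEN_WIDTH_M,
                 image_width_px: int = IMAGE_WIDTH_PX) -> float:
    """Size on screen, in millimetres, of a distance measured in image pixels.

    ASSUMES the image fills the full screen width.
    """
    return pixels * screen_width_m * 1000.0 / image_width_px


print(round(pixels_to_mm(0.5), 2))  # 2.69
```

Under that assumption a half-pixel offset is a few millimetres on the screen, which is enough to make a boot read as sunk into the dust or floating above it.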

Unfortunately, there is not a lot of high quality imagery from the Moon landings. There is little room on a tin-foil protected spacecraft (the Lunar Module) for high quality movie cameras and film. Television cameras mounted on-board were very low resolution, with limited bandwidth (not even in color), and all the high resolution stills that do exist were taken by people with only general photographic training. Film sources were either stills taken through a Zeiss prime lens on grainy 70 mm stock with Hasselblad cameras, or 16 mm film that ran at 12 FPS (once again to conserve weight). All these negatives were locked in vaults, and would need to be scanned at higher resolution than what was available — requiring special permission. The final scanned pictures were low contrast as the negative had faded due to the nearly 35 years since the missions flew. A few Internet sites were useful with historical records of the photos, and orthophoto maps of the landing area, but no refined height or geometric data existed from the Moon (it was 2005 after all). A sparse collection of data would have to suffice.

Recreating the geography of the Moon was difficult with so little information, but that was only the first concern. The Moon has 1/6 of Earth’s gravity, and that has to be simulated on Earth. All objects onscreen need to fall more slowly to the hard surface of the Moon, including fully operational Lunar Rovers and every speck of dust. As dusty as the surface of the Moon is, almost every footstep would be a visual effects challenge in stereo3D.
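How much slower is "slower"? A minimal sketch using the standard free-fall relation t = sqrt(2h / g) and the 1/6 approximation from the text. The constant names and the 9.81 m/s² value for Earth are assumptions added for the example.

```python
import math

EARTH_G = 9.81           # m/s^2, standard approximation
MOON_G = EARTH_G / 6.0   # the 1/6 figure used in the text


def fall_time(height_m: float, gravity: float) -> float:
    """Seconds for an object to fall height_m from rest, ignoring drag."""
    return math.sqrt(2.0 * height_m / gravity)


def slowdown_factor(gravity_a: float = EARTH_G, gravity_b: float = MOON_G) -> float:
    """How many times longer a fall takes under gravity_b than under gravity_a."""
    return math.sqrt(gravity_a / gravity_b)


print(round(fall_time(1.0, EARTH_G), 2), round(fall_time(1.0, MOON_G), 2))
print(round(slowdown_factor(), 2))
```

Because time scales with the square root of gravity, a fall under 1/6 gravity takes about 2.45 times as long, not six times as long. That square-root factor is also the speed change needed when slow-motion photography stands in for low gravity.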

The production hired astronaut Dave Scott (who drove an electric car on the Moon) as production consultant, reprising his collaboration on the HBO series From the Earth to the Moon. This raised the pressure on the VFX crews to maintain historical and scientific accuracy as much as possible within the given time constraints, especially since he was on set with production and never in a VFX facility for consultation. How the Moon looked, and how people and objects moved upon it, was under scrutiny from experts, not just audiences.

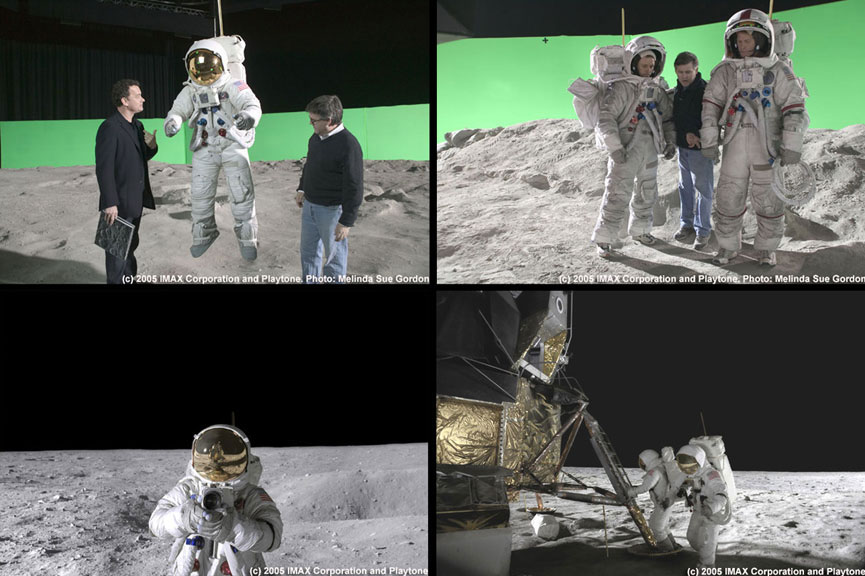

Live Action Photography

Stage 27 on the Sony Studios lot in Culver City was converted to a lunar surface by laying down several meters of sculpted concrete over foam blocks. This was dressed with very fine crushed granite dust, which had the annoying property of sticking to everything, much like real lunar dust. A full-scale Lunar Module recreation was leased from a museum and placed in the center of the set. To simulate the sun, an array of tightly packed HMI lights was hung on a crane arm and raised nearly to the roof at one end of this massive stage. Unfortunately, as large as Stage 27 is, it cannot fully depict the lunar surface. The set extensions would have to be seamlessly integrated, so a 20-foot-tall green screen was erected around 3/4 of the stage perimeter, with the rest of the set blacked out in the hope that this area would only contain wires. (More on that later.)

The custom space suits fabricated for each of the actors to approximate the real ones were just props, with no cooling or bathroom facilities built in. They contained no pressure bubble (there were no oxygen generators either), just an outer helmet, often obscured with black tape and tracking markers. They did, however, have a feature the real astronaut versions did not: a built-in harness and connection to an overhead wire flying rig to lift the actors and simulate lower gravity. All these artifacts needed to be added or removed digitally, and any missing pieces repaired in stereo3D, which is a challenge to this day.

The photography for Magnificent Desolation was a departure from the norm for IMAX films at the time. IMAX films, and especially 3D stereo films, moved the camera as little as possible, or only in slow establishing shots. The IMAX screen was considered a proscenium to the world, and at the scale of the projected film, moving cameras, standard blocking, and fast editing tricks were considered too jarring.

Most films in the IMAX 3D format were documentaries, as the cameras are incredibly heavy, so a locked-off camera was considered the best way to work. Digital processing of scanned images available since the early 1990s allowed for lighter weight cameras, and more motion for 3D films. But rather than hold back on conventional camera moves, and framing for technical or budgetary reasons, Magnificent Desolation embraced fluid cameras, with standard subjective, and over the shoulder shots to help personalize the journey for audience members. Cameras on cranes with long sweeping arcs, closeups with shallow focus, and vast environments are visible throughout the show.

While on the subject of photography, it is standard practice to shoot in slow motion to depict miniatures as large objects, or humans in microgravity. This results in the humorous effect that everyone in space moves very slowly, a film convention mocked by James Bond in Diamonds Are Forever. More successful films use wire work to depict “weightlessness,” with 2001: A Space Odyssey claiming the most successful pre-digital rendition of it.

Magnificent Desolation hired many of the physical effects crew from the HBO miniseries From the Earth to the Moon for this wire work. The television series (also produced by Hanks) was filmed in an old aircraft hangar, using large helium-filled balloons to offset the weight of the actors (it even used miniatures for the VFX). The method seemed like a good approach at first, but it turned out to be more unruly than the filmmakers had hoped. This time, low gravity was simulated with standard track and wire rigs attached to counterweights and connected to a single point on each astronaut, disguised near the antenna sprouting from the back of the backpack. Slow motion was only employed for scenes of the fully functioning prop Lunar Rover, as connecting it to wires as it drove around was prohibitive.

There were no 4K digital cameras in 2005, so two 8-perf VistaVision cameras were slaved together in a custom beam splitter-based stereo rig. This behemoth could open up to more than 5 inches of inter-ocular (or inter-axial) distance, the physical distance between the two lenses, but because of the beam splitter arrangement it could also collapse to zero inter-ocular, turning into a 2D image on the fly. The camera system could also converge, turning each camera independently to look at a specific point in space.

Stereo convergence is a standard method to put an image at the screen plane in the 3D theater, but it is generally not used in IMAX productions, which favor leaving the cameras parallel. Parallel cameras provide a more realistic sense of depth, as the images rarely sink into the screen (positive parallax) but always come forward toward the audience (negative parallax), despite the fact that some IMAX theaters would de-converge their projectors. That, however, is a whole other discussion. Theoretically, this camera arrangement produces images that are easily controlled and numerically accurate. But in practice aligned cameras are flummoxed by gravity, momentum, lens differences, polarization, and mechanical systems.
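The difference between parallel and converged rigs can be sketched with the textbook pinhole relation for horizontal parallax. This is an idealized model, not the production's camera math: it treats convergence as a horizontal image shift (ignoring the keystone distortion of toed-in cameras), and the lens, film-gate, and inter-axial values in the usage lines are illustrative assumptions.

```python
def screen_parallax(depth_m, interaxial_m, focal_mm, gate_width_mm,
                    image_width_px, convergence_m=None):
    """Horizontal parallax in pixels (right-eye x minus left-eye x).

    Negative: the point appears in front of the screen.
    Zero:     the point sits at the screen plane.
    Positive: the point appears behind the screen.
    convergence_m=None models parallel cameras (convergence at infinity).
    """
    focal_px = focal_mm / gate_width_mm * image_width_px
    inv_convergence = 0.0 if convergence_m is None else 1.0 / convergence_m
    return focal_px * interaxial_m * (inv_convergence - 1.0 / depth_m)


# Illustrative values only: 65 mm inter-axial, 50 mm lens, 37.7 mm gate width.
rig = dict(interaxial_m=0.065, focal_mm=50.0, gate_width_mm=37.7, image_width_px=4096)

for depth in (2.0, 5.0, 10.0, 1000.0):
    parallel = screen_parallax(depth, **rig)
    converged = screen_parallax(depth, convergence_m=5.0, **rig)
    print(depth, round(parallel, 1), round(converged, 1))
```

In this model a parallel rig never produces positive parallax: every object sits in front of the screen, and only points at infinity reach the screen plane. Converging at 5 m places that distance on the screen and pushes everything farther away behind it.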

Stereo filmmaking strives for, and requires, precision. Nature pushes back.

Image Processing and Preparation

No stereo photographed image is ready to use out of the box, especially for visual effects.

Color:

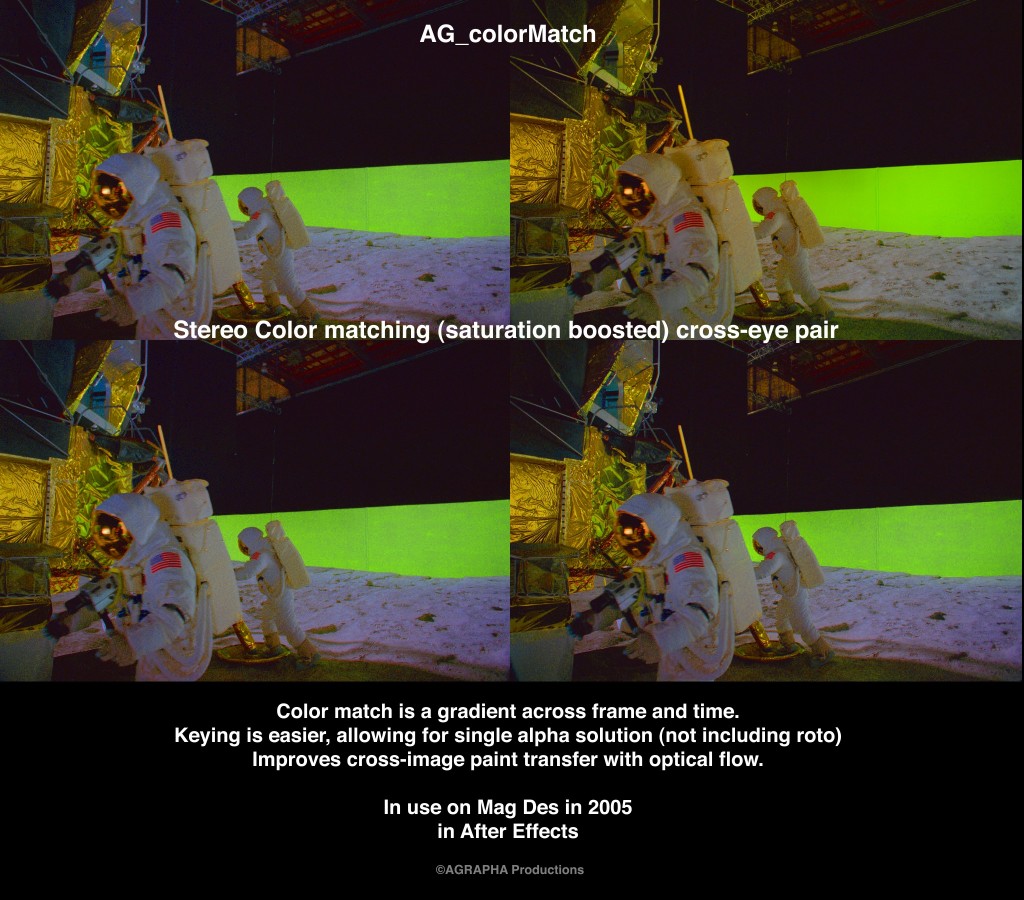

At the time one of the most challenging aspects of stereo filmmaking was color matching the two different images. Despite all the preparation, film stock matching, and identical processing it is unlikely that both images will have the exact same color. This problem is exacerbated by beam splitter rigs, as the images are affected by the neutral density of the mirror, and polarization of both reflection and color.

The standard solution to stereo color differences in 2005 was to have an artist carefully match the color grade by eye using histogram and saturation tools. Unfortunately, the color grade in stereo images is not always a simple overall correction, but often requires gradient masks to adjust for non-static variations in color. Non-VFX shots used this method, but this status quo approach is fraught with difficulty when it comes to green screen compositing, as the exact same procedure must be applied to both eyes to avoid stereo anomalies. Matching the color with such a manual process plagued many of the visual effects vendors, resulting in a lot of extra work.

At Sassoon Film Design, stereo color matching had been solved a year earlier using a proprietary tool created by the Compositing Supervisor. It is now a standard method for the industry. This automatic color correction tool matched values in the left image by analyzing the other ‘eye’. Bleeding edge research coming out of SIGGRAPH at the time relied on histogram matching to balance two images, but was often temporally unstable. This method instead used stereo disparity analysis and complex compositing methods to automatically transfer the color difference and stabilize the balance (more on this in the forthcoming What Color is Your Cookie Monster article).
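For readers unfamiliar with automatic color transfer, the sketch below shows the simplest global version of the idea: remap one eye's channel so its mean and spread match the other eye. This is not the proprietary SFD tool, and it is simpler than the histogram matching mentioned above; it uses no disparity analysis at all. The function name and sample values are hypothetical.

```python
from statistics import mean, pstdev


def match_channel(source, reference):
    """Linearly remap `source` so its mean and spread match `reference`.

    Both arguments are flat lists of values for ONE color channel.
    A global statistical match only: it assumes the two eyes see
    essentially the same content, which is not strictly true in stereo.
    """
    src_mean, ref_mean = mean(source), mean(reference)
    src_std, ref_std = pstdev(source), pstdev(reference)
    gain = ref_std / src_std if src_std > 0 else 1.0
    return [(value - src_mean) * gain + ref_mean for value in source]


# Hypothetical red-channel samples: the left eye is darker and flatter.
right_red = [0.20, 0.40, 0.60, 0.80]
left_red = [v * 0.8 + 0.05 for v in right_red]

print([round(v, 3) for v in match_channel(left_red, right_red)])
```

A global match like this treats the whole frame as one population, so it cannot follow the gradient-like variations described earlier, and its statistics shift whenever content enters or leaves one eye's view. Limitations of that kind are what a disparity-aware approach is meant to address.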

There were other color anomalies in the footage seemingly caused by the lenses themselves which introduced unwanted fringing in the final composites. Looking at the problem, the team noticed that the red channel of the RGB images delivered by production was microscopically larger than the other two color channels. Whether it was caused by the film, lens, or the scanning was unclear. Scaling the red color channel three one-hundredths smaller to match the other two channels created a very clean image, and solved the fringed color problem.
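The red-channel fix amounts to resampling one channel about the image center by a factor slightly below 1. Here is a minimal pure-Python sketch of that operation with bilinear interpolation. Reading "three one-hundredths" as 0.03 percent (a factor of 0.9997) is an assumption made for this example, as are the function and constant names; production tools would use an optimized image library.

```python
RED_SCALE = 1.0 - 0.0003  # ASSUMED reading of "three one-hundredths" as 0.03 percent


def scale_channel(channel, factor):
    """Resample a 2D channel (list of rows) scaled about its center by `factor`.

    factor < 1 shrinks the picture content; edge pixels are repeated to
    fill the border that the shrink exposes.
    """
    height, width = len(channel), len(channel[0])
    cx, cy = (width - 1) / 2.0, (height - 1) / 2.0
    out = []
    for y in range(height):
        row = []
        for x in range(width):
            # Inverse mapping: find where this output pixel comes from in the source.
            sx = min(max(cx + (x - cx) / factor, 0.0), width - 1.0)
            sy = min(max(cy + (y - cy) / factor, 0.0), height - 1.0)
            x0, y0 = int(sx), int(sy)
            x1, y1 = min(x0 + 1, width - 1), min(y0 + 1, height - 1)
            fx, fy = sx - x0, sy - y0
            # Bilinear blend of the four surrounding source pixels.
            top = channel[y0][x0] * (1 - fx) + channel[y0][x1] * fx
            bottom = channel[y1][x0] * (1 - fx) + channel[y1][x1] * fx
            row.append(top * (1 - fy) + bottom * fy)
        out.append(row)
    return out


red = [[0.0, 0.1, 0.2, 0.3],
       [0.1, 0.2, 0.3, 0.4],
       [0.2, 0.3, 0.4, 0.5]]
corrected_red = scale_channel(red, RED_SCALE)
```

At a factor this close to 1 the shift is far below a pixel near the center and grows toward the frame edges, which is where color fringing from a channel size mismatch is most visible.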

Since the movie was shot on film, there were also steps to eliminate dust and scratches, as well as to remove any radial gradient introduced by the lens. The goal at all times was to present the cleanest image possible, devoid of any film-related artifacts.

These proprietary solutions significantly reduced compositing time at SFD.

Time was not kind to the original Apollo negatives, and the team had to restore them. The color stock had faded heavily, leaving a sickly green cast on every image, with little ability to create a nice red, white, and blue. The images required individual color balancing to match prints and promotional shots that have circled the world for decades. Once the restored color was graded and approved, a three-step process was prepared to degrade the modern film stock back to a similar, though not quite identical, look. This color correction would be applied as the last step before images went to film. In this way the final product could cut back and forth to actual photos from the Moon, once again raising the bar of historical accuracy by direct comparison.

Stereo Geometry:

Technically a stereo image requires that a feature in one eye must be vertically aligned with the matching feature on its twin, so there is more to stereo image preparation than merely matching the color. Misaligned images are stereo anomalies which strain the eyes of the viewer. Stereo filmmaking uses two lenses that are theoretically perfectly aligned. As mentioned before, gravity, physics, and human error fight against this perfect plan, and the physical geometry of the final image is slightly askew from its stereo match. At IMAX resolution, a slight misalignment could literally be (headache producing) feet apart. As uncomfortable as this is to the viewer, it can make stereo set extensions and visual effects a near impossibility, unless the cameras are exactly recreated, or images properly aligned.

The process of aligning stereo images is called rectification, and every shot needed it. For Magnificent Desolation the right eye was considered the “prime” image, as it was not reflected and filmed upside down. Its flopped, color-corrected counterpart was scaled and rotated on a 3D card until the images lined up against reference lines as closely as a manual process allowed. Using the 3D card was a newer technique in the film industry at the time, but fairly common in research circles. Each shot went through several versions of this manual process, and was sent back as necessary until the geometry lined up. With two color-corrected, rectified images the team was almost ready to proceed.
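The goal of that manual scale-and-rotate pass can be expressed numerically: after correction, matched features in the two eyes should differ only horizontally. The sketch below measures vertical error on a few matched points and shows it disappearing when the inverse scale and rotation are applied. The frame size, feature positions, and rig error values are all hypothetical, and the real process was done by eye on images, not on point lists.

```python
import math


def apply_similarity(point, scale, angle_deg, center):
    """Scale and rotate a 2D point about `center`."""
    a = math.radians(angle_deg)
    x, y = point[0] - center[0], point[1] - center[1]
    return (center[0] + scale * (x * math.cos(a) - y * math.sin(a)),
            center[1] + scale * (x * math.sin(a) + y * math.cos(a)))


def vertical_errors(prime_pts, other_pts):
    """Vertical offset of each matched feature; all zeros means rectified."""
    return [o[1] - p[1] for p, o in zip(prime_pts, other_pts)]


# Hypothetical matched features in an ASSUMED 4096 x 3072 frame.
center = (2048.0, 1536.0)
prime = [(500.0, 400.0), (3500.0, 450.0), (2000.0, 2800.0)]

# Simulate the other eye: pure horizontal disparity, then a slight rig error.
ideal_other = [(x - 30.0, y) for x, y in prime]
misaligned = [apply_similarity(p, 1.002, 0.2, center) for p in ideal_other]

# The correction is the inverse scale and rotation about the same center.
corrected = [apply_similarity(p, 1.0 / 1.002, -0.2, center) for p in misaligned]

print(max(abs(e) for e in vertical_errors(prime, misaligned)))
print(max(abs(e) for e in vertical_errors(prime, corrected)))
```

Even a 0.2 degree rotation and a 0.2 percent scale difference produce several pixels of vertical error toward the frame edges in this example, which is why every shot needed the treatment.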

Better rectification methods were developed in the following years, and will be covered in a forthcoming article on Stereo Rectification.

Noise and Grain:

This IMAX feature was photographed on film with 35 mm 8-perf VistaVision cameras. The images were scanned at roughly 6K resolution to improve sampling, but were later scaled to 4K resolution for production and final delivery, and filmed out on 15-perf 70 mm film. Earlier tests producing visuals at 6K resolution broke every piece of 32-bit compositing and rendering software thrown at them, as RAM was more limited in computer systems at the time. In addition, 70 mm film has a tighter grain structure than VistaVision stock, so every image required further processing after color correction to bring the grain structures into alignment.

Noise reduction techniques vary widely, but very few methods available at the time were not locked behind proprietary vaults. SFD once again used a proprietary system built by the Compositing Supervisor (2003). This de-graining method used a spatio-temporal bilateral filter which averaged like pixels between frames while protecting areas of large motion (it is interesting to note that a 2005 SIGGRAPH paper proposed the same method that had been developed here in 2003). The noise reduction system used on the film added motion vector warping to improve the results, and removed a significant amount of grain from the stereo pairs, after which 70 mm grain structure was added in the final composite.
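The "average like pixels, protect motion" behavior can be illustrated with the temporal half of a bilateral filter applied to a single pixel. This is a generic textbook sketch, not the SFD system: it omits the spatial neighborhood and the motion vector warping described above, and the function name and sigma value are assumptions.

```python
import math


def temporal_bilateral(samples, center_index, sigma_range):
    """Denoise one pixel using its values in neighboring frames.

    `samples` holds the same pixel location across consecutive frames.
    Each frame is weighted by how close its value is to the current
    frame's value, so similar values are averaged (grain is reduced)
    while very different values, likely caused by motion, are ignored.
    """
    center = samples[center_index]
    weighted_sum = 0.0
    weight_total = 0.0
    for value in samples:
        weight = math.exp(-((value - center) ** 2) / (2.0 * sigma_range ** 2))
        weighted_sum += weight * value
        weight_total += weight
    return weighted_sum / weight_total


# A static, grainy pixel: the result moves toward the temporal average.
static_pixel = [0.50, 0.52, 0.48, 0.51, 0.49]
print(round(temporal_bilateral(static_pixel, 2, sigma_range=0.05), 4))

# Something bright passes through on one frame: that frame is left alone.
moving_pixel = [0.10, 0.10, 0.90, 0.10, 0.10]
print(round(temporal_bilateral(moving_pixel, 2, sigma_range=0.05), 4))
```

The range sigma sets the trade-off: it should be roughly the size of the grain amplitude, so that grain is averaged away while real changes in the image are preserved.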

Tens of thousands of frames were prepared with all these processes before any visual effects began for Magnificent Desolation.

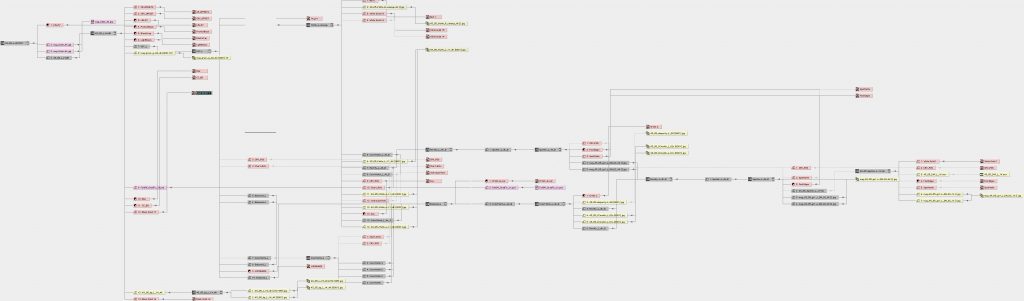

Programming and Computing

Preparing for the visual effects challenge was underway during principal photography. In 2005 Sassoon Film Design was an Apple Macintosh and Adobe After Effects production facility with 12 single-core computers. Additional rendering support was provided by Van Ling Productions and Agrapha Productions, for a combined rendering capacity of 23 cores. Half of these machines doubled as artist workstations, so most rendering was done after hours. SFD created 50 shots in less than four months with a team of 6 to 12 artists, compared to 40 artists producing half the number of shots over an 8-month schedule at another facility. It was absolutely necessary to make the process as efficient as possible to keep up.

One of the main methods to improve the pipeline was to establish common naming and compositing structures, along with custom JavaScript code to build many of the composites. A template is a reasonable approach, but with the sheer number of processes involved, and color matching research understood by only a few of the key artists, a code-based solution reduced a lot of complexity to the click of a button. Custom code was also written for data exchange between multiple packages. The main 3D tools for the production were Maya and Electric Image Animation System. The 3D software also required a lot of custom code: custom shaders, MEL scripts, and particle systems, all initially developed as footage came online.

Taking a cue from the production team on the Star Wars prequels, each shot went through a layout procedure that populated templates and put together full composite structures once footage was scanned. The contribution of this splinter team, which was eventually responsible for final shots as well, was crucial. They were also involved in processing archival footage for several montage sequences, and in managing final output and color wedges (a process in which several color choices are printed to film and evaluated on a light table for final color decisions). Since the team was integrated into ingest, backup, layout, and output, they had a broad understanding of the show requirements and quickly understood the rigors of 3D film production. As the layout phase ended, the artists moved into the full compositing team.

There were no web-based tools for tracking shots and notes at the time (Shotgun was several years away). For an earlier production, the SFD team had built a locally hosted web page which kept a record of the latest versions of shots and all notes from each screening session. This was refined for stereo3D production so that individual image streams and anaglyph renders of composited shots could be viewed. Each artist could review the notes on their shot in the custom-built web archive at any time, and refer back to earlier versions to compare notes.
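An anaglyph render packs both eyes into one image for quick review through colored glasses. The text does not say which anaglyph scheme the review pages used, so the sketch below assumes the common red-cyan layout; images are plain nested lists of RGB tuples to keep the example self-contained.

```python
def anaglyph_pixel(left_rgb, right_rgb):
    """Red-cyan anaglyph (ASSUMED scheme): red from the left eye,
    green and blue from the right eye."""
    return (left_rgb[0], right_rgb[1], right_rgb[2])


def anaglyph_image(left, right):
    """Combine two same-sized images given as rows of (r, g, b) tuples."""
    return [[anaglyph_pixel(l, r) for l, r in zip(left_row, right_row)]
            for left_row, right_row in zip(left, right)]


left_eye = [[(255, 10, 10), (200, 200, 200)]]
right_eye = [[(250, 20, 30), (100, 110, 120)]]
print(anaglyph_image(left_eye, right_eye))
# [[(255, 20, 30), (200, 110, 120)]]
```

An anaglyph discards part of each eye's color, so it is useful for checking depth and alignment but not for judging the color match between the eyes.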

Go Fever

With all of this preparation in place (weeks’ worth of compositing work, custom color correction tools, and mostly prepared composite structures), the actual “dirty” work was ready to begin. For most visual effects projects, the extra steps necessary to align footage and properly prepare it to be worked with are much simpler, and easier to recover from if an error is made. This kind of preparation is easily one quarter of the expense of a stereo3D production, but it is folded into the overall cost of the visual effects. All of the production time spent on set ensuring proper 3D filmmaking, an absolute necessity, is not always enough to deliver a properly aligned and usable product.

The team was ready to go to the Moon.

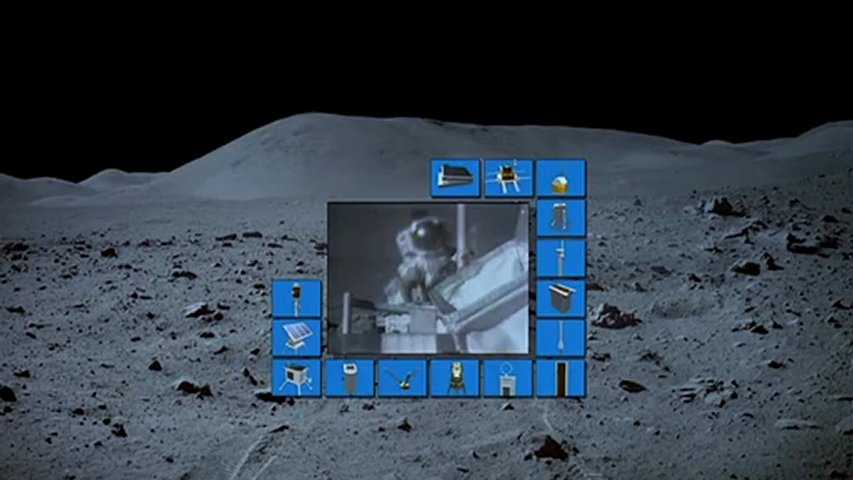

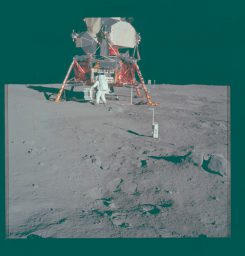

One eye of the original stereo conversion test that helped green-light the film. (not presented in 3D here)

AG

Section 2: Strolling on the Moon – With Sassoon Film Design

We would like to invite Chuck Yeager back to the fold, as he seemed to have blocked AgraphaFX on social media once he read the title of this article.

The moon landings happened. I faked them 40 years later. That’s what this is about.
