Writing Code For VFX Solves Problems, and Motivates Others

It is my supposition that everyone in the visual effects business should learn to program, even a little bit, as it opens avenues that were once barriers. Whether you are writing the code or just “breaking the code,” you need to expand your skill set while solving problems, and to look for solutions outside your immediate surroundings.

Several of the most inspiring moments of my career came from people writing software for visual effects, those whom I jauntily call “Code Breakers.” These programmers changed how I thought about solving VFX challenges, and they shape my approach to this day. Early in my career I saw their work and realized, “THAT was my solution” (or at least part of it). The crux of a good VFX career is learning to solve problems effectively, and that’s what they did. It is one thing to learn the buttons of a piece of software, and a completely different thing to solve a problem by creating software or methodology yourself: are you “driving the car,” or inventing it?

In any creative endeavor there is a “lightbulb moment,” when a solution suddenly becomes obvious. That moment can repeat over a series of years, on different occasions. The work of these programmers opened my mind to possibilities I had not considered at the time, which led me to further discoveries of my own. It is an interesting exercise to look back and see what motivated your own work, and that is what we will now explore.

A Story About Lightbulbs

I first attended the SIGGRAPH conference in 1995. Attending the conference seemed to be the “big thing” in the VFX industry, and in those days it was easily as large as Comic-Con. Since it was my first year, I mostly explored the show floor full of vendors, thinking that was where the action was. The full conference seemed too expensive, but I knew one of the speakers on the RenderMan course that year, and he snuck me in.

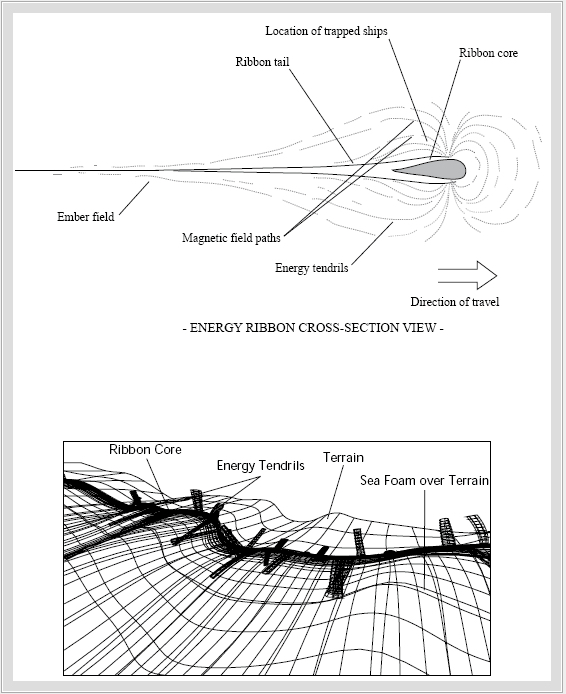

The room was packed, and the audience was enthralled by the visuals from Industrial Light and Magic, including Star Trek Generations, for which ILM created the energy ribbon effect of the Nexus and fully CGI starships.

Normally ILM was tight-lipped about its procedures, but here the team spoke freely (here are synopses of their course[1][2][3]). Ellen Poon told the crowd that she was at first wary of using code to create art, but once she saw the results her mind was changed. She evangelized that with mathematical code an artist can create beautiful works of art, exclaiming “I love [Perlin] Noise!” The ILM work presented by her, Joe Letteri, and Habib Zargarpour inspired me to get back into coding. I finally bought The RenderMan Companion at one of the bookstores on the show floor, instead of just thumbing through it, and started teaching myself a working knowledge of the RenderMan Shading Language (RenderMan itself, sold at another booth, cost only $99 at the time on Macintosh computers)! Back then there was no using RenderMan without knowing how to code, and if I wanted to work like ILM, or with them, this was the tool I needed to know.

What fascinated me most about the ILM team was their method of breaking a problem down into smaller problems and solving each one individually. This is a standard task management approach, but they applied it so plainly that it removed the mystery from computer graphics. Seemingly simple solutions combined to produce complex and compelling results, very much the philosophy of visual effects production identified by Dennis Muren on The Empire Strikes Back. Unlike all of the textbooks and computer graphics programming books that spoke in fantastically technical language, here were artists using the tool like filmmakers. They spoke a language I understood far better than that of the mathematicians. Practical application of software engineering. Fantastic!

Within the next year I was at BOSS Film Studios, sitting near the programming staff. I was in the Matte Painting department, but I also experimented with displacement and Perlin noise shaders to create “Gardner” clouds in one of the early attempts to build a digital cloud bank (read the post on Couch Clouds to see how this was eventually done). At the time most of the code used to create images was written in-house and hooked into the many Alias or Wavefront products running on Silicon Graphics Onyx machines. All those computers in the digital department were a significant investment (especially in those days), and they were a great opportunity to experiment. In many ways one could still define one’s own job, a lot like my college experience. It was still the early days of digital, and we were still figuring it out.

There were so many smart people at BOSS (Bob Mercier wrote a full ray tracer in a web browser for fun in 1996), but I was particularly impressed with the work of Maria Lando. In her own words, she wrote “a motion tracking module for high precision 2D and 3D camera-object motion recovery — designed and developed a unique algorithm for camera motion reconstruction with no prior information regarding scene geometry,” and she also made a set of software for recreating clean plates from filmed footage. Jamie Price, the VFX supervisor on Air Force One (AFO), introduced me to the idea of reducing noise in an image by averaging similar frames, but Maria took it one step further when she applied her 2D tracking engine to image reconstruction. She would track frames and, given a matte for the offending image feature, automatically fill in the missing parts and average them on a moving plate. This was the first time I had seen such a program. It was as if I looked upon magic. How many times had I done this by hand, and there it was: a fully automatic paint-in algorithm that worked (for the most part, depending on the footage). I couldn’t wait to use it for the matte paintings in the AFO Hijack sequence. (Programmer Joe Alter made a similar set of tools at BOSS for Cliffhanger, so I am not sure of the complete genesis, as these individuals collaborated often.)
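The averaging half of that clean-plate idea can be sketched in a few lines. This is a minimal Python sketch, not Maria Lando’s software: it assumes the frames have already been aligned to a common reference by a 2D tracker (the tracking itself is not shown), that images are grayscale 2D lists of floats, and that each matte marks the offending feature with 1.0. The function name `clean_plate` is hypothetical.

```python
def clean_plate(frames, mattes):
    """Average aligned frames per pixel, ignoring samples covered by a matte.

    frames: list of 2D lists (grayscale), assumed already aligned to one
            reference frame by a 2D tracker (tracking is not shown here).
    mattes: matching list of 2D lists; 1.0 marks the unwanted feature, 0.0 is clean.
    Returns the averaged plate; pixels with no clean sample in any frame are None.
    """
    height, width = len(frames[0]), len(frames[0][0])
    plate = [[None] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            # Keep only the samples where this frame shows clean background.
            samples = [f[y][x] for f, m in zip(frames, mattes) if m[y][x] < 0.5]
            if samples:
                # Averaging N clean samples also reduces independent grain
                # by roughly a factor of sqrt(N).
                plate[y][x] = sum(samples) / len(samples)
    return plate
```

Pixels that are covered in every frame come back as `None`, which is exactly where hand painting would still be needed.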

It was then that I realized the power of 3D and 2D tracking (a relatively new science in VFX at the time) and of motion extraction. I was a basic, novice RenderMan coder, and had written some games and fractal explorations over the years. Maria’s work was beyond my coding abilities at the time, but I was determined to put this capability in my tool chest. So instead of teaching myself advanced image processing, I broke down the core concepts and implemented the methodology in 2D using Puffin’s Commotion painting software, a tool like Photoshop for moving images (written by ILM staffers on their own time, just as Photoshop was). I have continued using this process through several different compositing packages over the years, and even wrote my own code to automate the setup and limit it with time-based bilateral filtering.
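Time-based bilateral filtering weights each neighboring frame both by its distance in time and by how closely its pixel matches the reference frame, so a moving object in one frame does not ghost into the average. The sketch below is a minimal Python illustration of that general technique, not the author’s actual tool; it assumes pre-aligned grayscale frames stored as 2D lists, and the parameter names and defaults (`sigma_time`, `sigma_value`) are hypothetical.

```python
import math


def temporal_bilateral(frames, t, sigma_time=1.5, sigma_value=0.1):
    """Filter frame t using its aligned neighbors, pixel by pixel.

    Each frame k gets the weight
        exp(-(k - t)^2 / (2 sigma_time^2)) * exp(-(I_k - I_t)^2 / (2 sigma_value^2)),
    so distant frames, and pixels that differ strongly from the reference
    (e.g. a moving object), contribute little to the result.
    """
    ref = frames[t]
    out = []
    for y, row in enumerate(ref):
        out_row = []
        for x, center in enumerate(row):
            total = 0.0
            weight_sum = 0.0
            for k, frame in enumerate(frames):
                v = frame[y][x]
                w = (math.exp(-((k - t) ** 2) / (2.0 * sigma_time ** 2))
                     * math.exp(-((v - center) ** 2) / (2.0 * sigma_value ** 2)))
                total += w * v
                weight_sum += w
            # The reference frame always has weight 1, so weight_sum > 0.
            out_row.append(total / weight_sum)
        out.append(out_row)
    return out
```

Compared with a plain mean, the value term is what keeps an outlier (say, a flash or a passing object in one frame) from dragging the filtered pixel toward it.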

Around the same time that I was at BOSS, Paul Haeberli published the Grafica Obscura website. Its articles presented radical ideas: adding light, automatic stitching, and automatic focus reconstruction from several photos. It was clear that Paul was thinking beyond the current trend, and the site is a record of that forward thinking. With a mixture of code and process I began to implement whichever of Paul’s ideas I could, but more with process than programming, which kept it limited. Most of the artists at BOSS could not code like him, and the programmers were very busy, but what little we could do inspired by his work was always worthwhile. His website is still a useful reference.
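The “adding light” idea rests on the fact that light is additive: photographs of a static scene, each lit by a single source, can be summed with weights to simulate new lighting after the fact. Below is a minimal Python sketch of that principle as I understand it from Haeberli’s synthetic lighting article. It assumes linear (not gamma-encoded), unclipped RGB images stored as 2D lists of tuples, and `relight` is a hypothetical name.

```python
def relight(light_images, weights):
    """Combine photos that each show the scene lit by a single light.

    light_images: list of images, each a 2D list of (r, g, b) tuples in linear light.
    weights: one (r, g, b) multiplier per image; a colored weight tints that light.
    Because light adds linearly, the weighted sum approximates a photo taken with
    all the lights on at those intensities (assuming linear, unclipped exposures).
    """
    height, width = len(light_images[0]), len(light_images[0][0])
    result = [[(0.0, 0.0, 0.0)] * width for _ in range(height)]
    for image, (wr, wg, wb) in zip(light_images, weights):
        for y in range(height):
            for x in range(width):
                r, g, b = image[y][x]
                acc_r, acc_g, acc_b = result[y][x]
                result[y][x] = (acc_r + wr * r, acc_g + wg * g, acc_b + wb * b)
    return result
```

For example, dimming a fill light to half strength and tinting it blue is just a change to its weight tuple, with no reshoot.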

Turning on the Lightbulb

Electric Image Animation System introduced its own version of a shading language in 1998, written in C. It was my main 3D program at the time, so I started writing my own commercial shaders, and I was determined to attend the full SIGGRAPH conference to increase my knowledge. The conference is at once intimidating and inspiring. One can see the magic and understand the concepts without necessarily understanding all the math, all directly from the people who can best explain it. I am always looking for a jumping-off point, and while writing my own software product, I started implementing some of my own ideas. Books by Ken Musgrave, Tony Apodaca, and others who write about graphics algorithms, along with textbooks for college math courses I never took, informed the work. Now I had to take RenderMan concepts and move them into a different language while I was still learning it. I also had to explore the limits of this shading language, and find ways to work around them without writing extra plug-ins.

One of the big challenges with the software at the time (later rectified) was that you could not load any texture maps through the shader API, as texturing was handled as a basic part of the program. Procedural shaders normally apply to an entire piece of geometry unless they are limited by texture maps or some other function. To solve the problem I introduced the idea of REACTIVE shaders, which read the surface color below to control the final shading. Soon I had integrated the shaders into the layer system of the software, and created phantom layers by temporarily storing data in unused color channels, or by painting the color of the geometry in another package. With these simple tools it was possible to create complex shading networks without relying on the developer to change the software for my particular use. Rather than build one giant shader for a single purpose, I made building blocks of basic shader functions, plus a few black-box shaders to round out specific effects. By coding smartly and using the existing layer system, I did not always have to write code to solve a problem, but my code was the core component. The benefit of writing your own solution became abundantly clear.
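To make the REACTIVE idea concrete, here is a minimal Python sketch of the logic. It is not Electric Image shader code, and I am not reproducing that API. It simply assumes the shader receives the surface color already computed by the layer below, and that one color channel (hypothetically blue here) carries a painted control value. The function names and the stripe pattern are illustrative assumptions.

```python
import math


def stripe_pattern(u, frequency=8.0):
    """A simple procedural pattern: soft stripes along u, with values in [0, 1]."""
    return 0.5 + 0.5 * math.sin(2.0 * math.pi * frequency * u)


def reactive_shade(surface_rgb, u, effect_rgb=(0.9, 0.3, 0.1), mask_channel=2):
    """Blend a procedural effect over the surface color found below.

    The value in surface_rgb[mask_channel] acts as a painted control map:
    0.0 leaves the underlying surface untouched, 1.0 applies the full effect.
    """
    mask = surface_rgb[mask_channel]
    amount = mask * stripe_pattern(u)
    # Linear blend between the incoming surface color and the effect color.
    return tuple(s * (1.0 - amount) + e * amount
                 for s, e in zip(surface_rgb, effect_rgb))
```

Because the mask comes from the surface itself, the same shader can be confined to painted regions without any change to the host software.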

Other programmers who wrote much more complex shaders for Electric Image later moved on to work directly for Pixar, and may have implemented some of the ideas we shared about REACTIVE technology to fake subsurface scattering in Finding Nemo by painting control maps directly onto the geometry (which they more correctly termed scalar fields). I like to think our exchange provided some inspiration for that solution, but it is only a supposition; they are very smart. As a favor, I also wrote Electric Image shaders that rendered different-sized specular highlights on a per-channel basis, which The Rebel Unit used for the chrome spacecraft at the beginning of Attack of the Clones. All this from merely attending a talk at SIGGRAPH in 1995.
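The per-channel highlight trick can be illustrated with a standard specular model in which each color channel gets its own exponent. This Python sketch assumes a Blinn-Phong specular term, which is my choice for illustration; the original shaders were written in Electric Image’s shading language, and their exact lighting model is not stated. The exponent values are hypothetical.

```python
import math


def normalize(v):
    """Return v scaled to unit length."""
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)


def per_channel_specular(normal, light_dir, view_dir, exponents=(20.0, 40.0, 80.0)):
    """Blinn-Phong specular term with a separate exponent per RGB channel.

    A lower exponent gives a wider highlight, so (20, 40, 80) makes the red
    highlight broadest and the blue one tightest, producing a colored fringe.
    """
    n = normalize(normal)
    # Half vector between the light and view directions.
    h = normalize(tuple(l + v for l, v in zip(normalize(light_dir), normalize(view_dir))))
    n_dot_h = max(0.0, sum(a * b for a, b in zip(n, h)))
    return tuple(n_dot_h ** e for e in exponents)
```

At the center of the highlight all three channels reach 1.0; moving off-axis, blue falls off first and red last, which reads as a warm halo around a white core.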

Successful software of my own only fed a greater hunger to see what others did with code, and SIGGRAPH became a mine of information. One person’s approach, with a few tweaks to fit my pipeline, may be exactly the right solution. You have to go where the best minds in your field gather, and try to understand them. Over time the current of radical thinking about visual images soaks in and stirs your own creative process. I like to treat this inspiring wellspring of information as a supply of bricks with which I can build my toolset. It is now one of the events I look forward to most every year.

The Lightbulb Explodes

The SIGGRAPH 2001 conference in Los Angeles was likely one of the most impactful of my career. First, Paul Huston of ILM presented the digital matte painting work from The Phantom Menace and Space Cowboys: camera projection methods (rendered in Electric Image) that inspired much of my work, especially on the interactive menus for the Star Wars DVDs. Though not a coding example, it was the reason I was in my chair.

The same afternoon, in the same room, J.P. Lewis presented his talk Lifting Detail from Darkness. The description was rather dry for what was about to be revealed: “A high-quality method for separating detail from overall image region intensity. This intensity-detail decomposition can be used to automate some specialized image alteration tasks.” J.P. was one of the first people to publish a 3D tracking algorithm and, as I later learned, was the principal coder of the tracking engine used in the Commotion software I was so fond of in my pipeline (to this day, still the best 2D tracking software I have ever used). FXGuide put together a history of 3D tracking that is well worth the read; both J.P. and Joe Alter are covered in it.

The presentation was about the film 102 Dalmatians, which centers on the pursuit of a spot-free Dalmatian, something that does not occur in nature. Creating the nearly albino dog required expensive and tedious digital painting. Because of the time involved, a procedural method was sought to improve the pipeline, and J.P. invented one. In front of the whole audience, he introduced the idea of decoupling high-frequency detail from lower-frequency image intensity, much like audio signal processing, in an advanced unsharp mask procedure. Rather than using filtering to remove noise, he identified the fur detail as the “noise” and decoupled it from the image to protect it. At that point it became simple to use broader painting techniques to remove the unwanted spots from the animal, and then restore the missing detail. The final images (seen in slides from the presentation) show how clean the results were.
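The core of frequency separation fits in a few lines: blur the image to get the low band (broad intensity), subtract to get the high band (detail), edit the low band, then add the detail back. This Python sketch is a generic additive split with a box blur standing in for the low-pass filter. That is my simplification for illustration, not Lewis’s published algorithm, and the function names and `radius` default are hypothetical.

```python
def box_blur(image, radius):
    """Mean over a (2r+1) x (2r+1) window, clamped at the image borders."""
    h, w = len(image), len(image[0])
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            vals = [image[j][i]
                    for j in range(max(0, y - radius), min(h, y + radius + 1))
                    for i in range(max(0, x - radius), min(w, x + radius + 1))]
            row.append(sum(vals) / len(vals))
        out.append(row)
    return out


def split_frequencies(image, radius=2):
    """Split a grayscale image into a low band (intensity) and a high band (detail)."""
    low = box_blur(image, radius)
    high = [[p - l for p, l in zip(prow, lrow)] for prow, lrow in zip(image, low)]
    return low, high


def recombine(low, high):
    """Add the detail band back onto a (possibly repainted) low band."""
    return [[l + d for l, d in zip(lrow, drow)] for lrow, drow in zip(low, high)]
```

In use, the artist paints out the spots in `low`, where broad strokes are enough because there is no fine detail to damage, and `recombine` then restores the untouched fur texture from `high`.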

Once more, magic.

The idea of literally flaying the image into different components, frequencies, and band-limited regions reached a wider audience and launched myriad detail transfer methods. I immediately began implementing them in existing software, and coding automated setups to build the composites. I prefer using existing software as a core rather than relying strictly on a single optimized coded tool. The technique has had the most impact on my programming and VFX methodology, leading me to develop several tools for multi-frequency sharpening, detail transfer, and color balancing for stereo IMAX films, all before 2004 (more on this in a future article). I have since used it for motion blur transfer, artifact repair, de-aging Leonard Nimoy and Jim Carrey (both in flashback sequences, portraying them 14 to 20 years younger), and much more. From the time of that talk, I began looking deeper into every problem to find a procedural way to solve it. J.P.’s work made me realize that many solutions sit directly within the plate, and just need to be found, or decoded. The fruits of attending this one presentation extend to this day.

[Editor’s note] It has come to my attention that Greg Apodaca, a Photoshop artist in the 1990s, may have originated this technique, though his work was not necessarily the progenitor of Lewis’s method.

Lighting A Path (Point by Point)

J.P.’s work is only one of the important keystones of my methodology derived from SIGGRAPH presentations. Few people noticed a singular innovation in the 2002 film XXX, with visual effects by Digital Domain, as it was not written down (as far as I can find). The DD team spoke at length at SIGGRAPH 2003 about creating a photorealistic digital double of the main actor for stunt scenes, as well as the fantastic particle simulation work for the avalanche (video of them here). What impressed me most were the VFX no one saw, which were absolutely necessary to complete the avalanche effect: the method of terrain re-creation. Cinefex magazine reported the official method as “The geometry of the avalanche was based on the location plate terrain. We took the terrain model captured by the data integration team and re-created it using 3D Track or good old blood, sweat and tears.” Hidden in that sentence is one more of the evolutionary code-based solutions that spurred me toward larger solutions of my own. It is the work of the lead programmer at Digital Domain, Doug Roble.

During his SIGGRAPH presentation on the work for that film, Doug described a methodology that was not covered in the notes for the presentation. Doug is widely known for his 3D tracking engine, called “Track,” which gave Digital Domain an early technical advantage, along with several of his other innovations. By 2002, commercial 3D tracking packages had brought that advantage to everyone and competed for market share, each with its own strengths. One package, Boujou, used optical flow tracking to produce thousands of data points from which it solved a 3D camera. The software would then recreate those points in 3D space.

This was a relatively new approach using dense machine vision, as at the time most tracking engines used discrete tracked points rather than optical flow algorithms. Boujou could create a dense sample field at the press of a button, and Mr. Roble reasoned that the information should not go to waste. Using a least-squares triangulation algorithm, he turned all those sample points into a low-density mesh that (with a little cleanup) closely resembled the terrain in the helicopter plates. This geometry was more accurate than manually minimizing error through observation in a brute-force fight with polygons, and potentially cheaper due to the time savings. With cleaned-up polygon geometry the team could bounce avalanche particle systems all over the plate, and modify existing imagery with camera projection methods. Whether or not he originated this method, Doug’s ability to leap past the visceral reaction (“Oh cool, it looks like the terrain!”) and see camera-solve data as the points of a polygonal mesh was far more important to me than digital actors and volumetric particle systems, because I could implement some of those tracking ideas with what I had on hand.
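Turning a tracker’s point cloud into terrain can be approximated very simply when the ground is roughly a heightfield. The Python sketch below is a swapped-in technique for illustration, not Doug Roble’s algorithm: it bins points onto a grid in the ground plane, takes each cell’s height as the mean of its samples (the least-squares fit of a flat patch), and connects filled neighboring cells with triangles. A production tool would more likely use a Delaunay triangulation of the projected points. The function name and the y-up convention are assumptions.

```python
def points_to_heightfield_mesh(points, cell_size):
    """Turn a dense 3D point cloud (x, y, z with y up) into a coarse terrain mesh.

    Each grid cell's height is the mean y of the points that land in it, the
    least-squares fit of a flat patch to those samples. Cells with no points
    are left as holes for manual cleanup.
    """
    # Accumulate (sum of heights, count) per ground-plane cell.
    sums = {}
    for x, y, z in points:
        key = (int(x // cell_size), int(z // cell_size))
        total, count = sums.get(key, (0.0, 0))
        sums[key] = (total + y, count + 1)

    # One vertex per occupied cell, placed at the cell center.
    index = {}
    vertices = []
    for (i, k), (total, count) in sorted(sums.items()):
        index[(i, k)] = len(vertices)
        vertices.append(((i + 0.5) * cell_size, total / count, (k + 0.5) * cell_size))

    # Two triangles for every 2x2 block of occupied cells.
    triangles = []
    for (i, k) in index:
        quad = [(i, k), (i + 1, k), (i + 1, k + 1), (i, k + 1)]
        if all(q in index for q in quad):
            a, b, c, d = (index[q] for q in quad)
            triangles.append((a, b, c))
            triangles.append((a, c, d))
    return vertices, triangles
```

The cell size trades detail for robustness: larger cells average more tracker noise away but soften ridges and gullies, which is where the “little cleanup” comes in.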

Moon Light

I employed the method on the 2005 stereo IMAX film Magnificent Desolation: Walking on the Moon in 3D. The movie was filmed with 3D cameras on sound stages in Culver City, with actors suspended on wires to simulate micro-gravity. To kick up dust from the astronauts’ feet (the moon is very dusty) and from the tires of the Lunar Rover, I used a method similar to Digital Domain’s to recreate the lunar surface. Lacking a dedicated triangulation algorithm, I manually built the surface of the set by snapping to points recovered from both cameras using SynthEyes, a new package at the time.
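Recovering points “from both cameras” is a triangulation problem: each tracked feature defines a ray from each camera, and the 3D point is estimated where those rays come closest, since they rarely intersect exactly. The sketch below uses the simple midpoint method, a standard textbook technique I am substituting for illustration. It is not SynthEyes’ solver, and it assumes the camera centers and per-feature ray directions are already known from a camera solve.

```python
def sub(p, q):
    """Component-wise difference of two 3D vectors."""
    return tuple(a - b for a, b in zip(p, q))


def dot(p, q):
    """Dot product of two 3D vectors."""
    return sum(a * b for a, b in zip(p, q))


def triangulate_midpoint(origin_a, dir_a, origin_b, dir_b):
    """Estimate a 3D point seen by two cameras as the midpoint of the shortest
    segment between the two viewing rays O_a + s*dir_a and O_b + t*dir_b.
    """
    w0 = sub(origin_a, origin_b)
    a, b, c = dot(dir_a, dir_a), dot(dir_a, dir_b), dot(dir_b, dir_b)
    d, e = dot(dir_a, w0), dot(dir_b, w0)
    denom = a * c - b * b
    if abs(denom) < 1e-12:
        raise ValueError("rays are parallel; no unique closest point")
    # Ray parameters of the closest points (standard closed-form solution).
    s = (b * e - c * d) / denom
    t = (a * e - b * d) / denom
    pa = tuple(o + s * v for o, v in zip(origin_a, dir_a))
    pb = tuple(o + t * v for o, v in zip(origin_b, dir_b))
    return tuple((p + q) / 2.0 for p, q in zip(pa, pb))
```

For a stereo rig the two origins are the left and right camera centers; the length of the gap between `pa` and `pb` is also a handy sanity check on the quality of the track.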

Further following the lead of the DD team, my team used the geometry to intersect and bounce volumetric particle systems simulating one-sixth gravity. I asked the developer of the software if he could build in a triangulation method, which he happily did, though it did not arrive in time to be used on the show. As for my own code, I spent most of my effort building stereo compositing tools and writing particle systems, but the initial idea came from someone who “broke” the code as well as wrote it. XXX could have survived with geometry that was close enough, but stereo filmmaking is unforgiving, and the photogrammetry approach from tracking was crucial, as we had to add perfectly connected set extensions.

I continued to use the track-and-triangulate procedure on the filmed footage throughout the project, but modified the approach for set extensions. Recreating geometry from the lunar photography of the Apollo missions relied on multiple images taken from several different locations around Hadley Rille by astronauts David Scott and James Irwin. Using a 70mm Hasselblad camera, they took thousands of photos; several were scanned at IMAX resolution for our use and stitched together into panoramas. These panoramas were far enough apart, with enough parallax, that it was possible to re-create a significant portion of the lunar surface from sampled data rather than guessing based on observation. This data served as the basis for turning the photos from the moon missions into 3D representations of the actual location, which were then blended into the movie set made of concrete, dust, and foam, which replaced the areas that could not be solved via manual photogrammetry. Whatever could not be recreated was informed by the recovered geometry and built through standard 3D modeling (Eric Hansen and Paul Haman helped build the wide pull-back shot from this scant data and imagery).

We sampled as much data as we could to provide a realistic experience, in as historically accurate a way as possible, and for billions of dollars less than the original moon effort. This was long before recent lunar missions mapped the surface of the moon and provided terrain data, so this may have been one of the first attempts at recreating those terrains in such a manner. Astronaut Dave Scott’s positive reaction to the resulting converted imagery was almost reward enough. During the screening, several notes from his mission logs were read to him, and he was asked if this was the EXACT spot where he stood. “Who cares? THIS IS COOL!” If we could please one of the men who actually stood there, and re-ignite his memories of the experience, what more could one ask? The show went on to win the first Visual Effects Society Award for Large Format Visual Effects, but that is a story for another time.

Since then I have used derivations of this technique to rebuild sets and actors, trying to negate the perspective influence of the lens while rebuilding reality in the computer. It was also used to stereo convert large terrains in the first fully stereo-converted film, Lions 3D, in 2006. For that show I wrote software to convert Boujou data to SynthEyes, so that I could leverage both optical flow engines to build points before triangulating in SynthEyes. Boujou had added its own triangulation engine, but at the time it was cheaper to move the data from the lower-end version of that software into SynthEyes for triangulation. As more powerful photogrammetry software comes into the world, the tools I wrote are used less, but Doug Roble’s (overlooked) little exploration has paid dividends in my software development and visual effects approach.

Keep The Lights On

There are many solutions and efficiencies available with nothing more than a meager knowledge of code writing, no matter which programming language you choose. Coding is an exercise in critical thinking and problem solving that you might otherwise miss if the language of coders is foreign to you. Learning from the examples provided by others is the best way to evolve your skill set in the VFX industry. With proper motivation, it is possible to improve your coding ability, CREATE the new solution, and inspire others in the process.

Unlock your mind (if only a little bit). All it takes is the right impetus.

The annual SIGGRAPH conference is about to open, along with the co-located DigiPro conference. I have learned so much from many in this community of mathematicians and artists, and I encourage anyone reading this to attend if you have the means to do so. Do not be intimidated. Devour books and magazines on visual effects and programming the way other people eat potato chips, but most of all:

Write something. Make something. Solve problems, and inspire others. There is a mystery in the challenge before you, so solve the puzzle, learn more, break the code, and share.

AG
